Cambridge Handbook: Guiding Ethical AI in Healthcare Education

March 12, 2025 · 4-minute read

CIMA Care's Approach to Artificial Intelligence: Implementing Cambridge Handbook Principles in Healthcare Education

At CIMA Care, we are committed to ensuring our digital health tools follow ethical practices. The recently published Cambridge Handbook on AI Ethics helps us examine how we utilize AI and compare it to recommended standards.

AI-Enhanced Learning Curation: Cultivating Reliable Healthcare Knowledge

At CIMA Care, we integrate AI technology with rigorous human oversight throughout our blog articles and CIMA Health Academy courses. By combining human insight and AI analytics, we identify emerging trends and priority topics vital to healthcare professionals seeking to advance child health outcomes. This blended approach helps us generate initial content frameworks highlighting critical discussion areas.

We then compile authoritative information from current, reliable, and evidence-based material for each selected topic and subheading, using AI to structure and organize material effectively. Our quality assurance process includes meticulous fact-checking against established scientific literature, adherence to stringent credibility standards, and a commitment to evidence-based content derived from contemporary research. We ensure our offerings maintain high accuracy and educational integrity through transparent citation practices and comprehensive human expert review.

CIMA Care's content development workflow: Combining AI efficiency with human expertise for healthcare excellence.

Aligning with Ethical AI Frameworks

The Cambridge Handbook of the Law, Ethics, and Policy of Artificial Intelligence [1] lays out foundational principles for accountable and transparent AI development. Below, we explore how CIMA Care’s practices meet these guidelines, focusing on key concepts such as responsibility, data protection, and regulatory compliance:

a) Managing Healthcare Data Responsibly with AI

• Moral Responsibility: According to the Cambridge Handbook, organizations that knowingly deploy AI—especially in high-stakes areas such as healthcare—bear responsibility for any foreseeable adverse outcomes. This responsibility entails understanding an AI system's potential risks and taking proactive measures to mitigate them. Consequently, AI decision-makers must ensure strong management and accountability mechanisms are in place before implementation. CIMA Care actively determines when and how AI is applied, ensures a deep understanding of its limitations, and assumes accountability for all published materials. This approach aligns with the Cambridge Handbook’s principle that moral responsibility is not just about causal connection but also about knowledge and the ability to foresee potential consequences.

• Special Protections: The Handbook notes that health-related information is “special category data” warranting enhanced protection under the GDPR. In line with these standards, CIMA Care applies rigorous data protection protocols when using AI to prepare educational content. Our practices include anonymization and pseudonymization of illustrative cases, processes the Handbook explicitly identifies as “minimum measures to always consider before sharing health-related data for reuse.”
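As a rough illustration of the pseudonymization step mentioned above, the following Python sketch removes direct identifiers from an illustrative case and replaces the patient ID with a keyed hash. It is a minimal sketch under stated assumptions, not a description of CIMA Care's actual tooling: the field names, the `SECRET_KEY` value, and the `pseudonymize_case` helper are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical secret key; in practice it would be stored separately from the data.
SECRET_KEY = b"replace-with-a-securely-stored-key"

# Hypothetical names of fields that identify a person directly.
DIRECT_IDENTIFIERS = {"name", "address", "phone"}


def pseudonymize_case(case: dict, key: bytes = SECRET_KEY) -> dict:
    """Return a copy of the case without direct identifiers.

    The patient ID is replaced by a keyed hash (HMAC-SHA256), so the same
    patient always maps to the same pseudonym without exposing the ID.
    """
    cleaned = {
        field: value
        for field, value in case.items()
        if field not in DIRECT_IDENTIFIERS and field != "patient_id"
    }
    digest = hmac.new(key, str(case["patient_id"]).encode("utf-8"), hashlib.sha256)
    cleaned["pseudonym"] = digest.hexdigest()[:16]
    return cleaned


# Example with made-up data.
case = {
    "patient_id": "P-001",
    "name": "Jane Doe",
    "age_group": "0-5",
    "diagnosis": "measles",
}
print(pseudonymize_case(case))
```

Pseudonymized records can still be linked back to a person by anyone holding the key, so they remain personal data under the GDPR; full anonymization would require removing that link entirely.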

b) Consumer Protection Implications for AI in Healthcare

According to The Cambridge Handbook, healthcare consumers face unique challenges when interacting with AI-driven tools. At CIMA Care, we address these key considerations to ensure user trust and safeguard patient autonomy:

• Algorithmic transparency:

One recurring concern in the Handbook is the “black box problem,” where consumers have limited insight into how AI systems reach decisions. To mitigate this, CIMA Care applies thorough human review to AI-assisted health education modules, cites evidence-based sources clearly, and explains how suggestions are derived.

• Dark patterns and manipulation risks:

The Handbook warns that AI-powered systems can “influence consumers into making choices that do not serve their best interests.” CIMA Care reduces manipulation risks by prioritizing scientific validity and medical accuracy over engagement metrics. This evidence-based focus helps us foster informed decision-making and maintain the trust of healthcare consumers.

c) Media Ethics in Health Communication

• Promoting Trustworthy Content:

According to The Cambridge Handbook, using AI to prioritize content can foster reliable information sharing. In line with this principle, CIMA Care employs AI-driven tools to assist in developing our materials while maintaining human supervision and revisions at every step. This ensures we never lose sight of our commitment to evidence-based content and user well-being.

• Implementing ‘Procedural Safeguards’:

The Handbook also highlights the value of “procedural safeguards”: clearly defined steps that guarantee content is created responsibly and transparently. In practice, CIMA Care’s healthcare professionals review every AI-assisted article or post before publication to validate its accuracy, reliability, and relevance, adding or removing material in each section according to reliable research findings. By embedding expert oversight, we maintain high-quality, ethical health communication and ensure AI remains a supportive tool rather than a substitute for professional conclusions.

Ethical AI in healthcare: Balancing data security, consumer protection, and transparent communication.

d) Fairness and Representation

Ensuring equitable AI practices requires attention to both fair procedures and fair outcomes.

• Balancing Fair Procedures and Fair Outcomes: As The Cambridge Handbook explains, fair procedures ensure consistency, rational justification, and transparency in decision-making, while fair outcomes consider the broader social and economic impacts on different groups, addressing potential disparities and power imbalances.

• Addressing “Cultural Imperialism”: In media contexts, the Handbook warns of the danger of “cultural imperialism,” where a “dominant group’s experience and culture is universalized and established as the norm.” At CIMA Care, we strive for fairness by keeping AI-generated content under human direction and by systematically reviewing outputs against trusted, evidence-based material from around the world to prevent bias. This approach aligns with the relational understanding of fairness described in The Cambridge Handbook, which emphasizes not only accuracy but also power imbalances, participatory decision-making, and the broader societal impact of AI-generated decisions.

e) Responsibility and Transparency

Upholding multi-level transparency in AI fosters trust and accountability, reflecting the Handbook’s call for responsible system governance in educational contexts.

• Commitment to Multi-dimensional AI Transparency: The Handbook emphasizes that transparency in AI systems can take several forms: internal awareness within the organization, open communication with users, and clarity regarding external AI providers. Aligned with these principles, CIMA Care clearly communicates our AI use by informing team members about system capabilities and limitations and by giving our users appropriate disclosures on how educational content, images, videos, and other materials are developed or delivered. We recognize that meaningful transparency builds trust and enables informed decision-making, thereby adhering to established ethical frameworks for responsible AI usage in educational settings.

f) Design for Values in Practice

By embedding ethical principles from the outset, the “Design for Values” approach ensures AI and other technologies uphold integrity while respecting diverse needs in healthcare contexts.

• Holistic, value-centered Design: The Handbook emphasizes that “technology” inherently embodies values through its design choices. The “Design for Values” approach advocates proactively weaving ethical considerations into development rather than treating them as afterthoughts. Guided by these ideas, CIMA Care establishes clear ethical priorities shaping our educational platform. For instance, we build inclusivity, accuracy, and trustworthiness into our vaccination program content by carefully considering diverse cultural perspectives and accessibility needs. Reflecting the Handbook’s guidance on “context-specificity” in educational applications, we tailor resources to local healthcare realities and respect users’ autonomy in distinct regions. By centering human needs and values in our design process, we support healthcare professionals with evidence-based material attuned to the unique contexts in which they work.

Core AI ethics principles: balancing fairness, collaborative responsibility, and design for values.

Implementing AI-Assisted Learning with Human Oversight

At CIMA Health Academy, we use artificial intelligence to enhance the preparation of course materials but rely on the non-AI “Arena” platform for content delivery, ensuring that automation never replaces professional decisions.

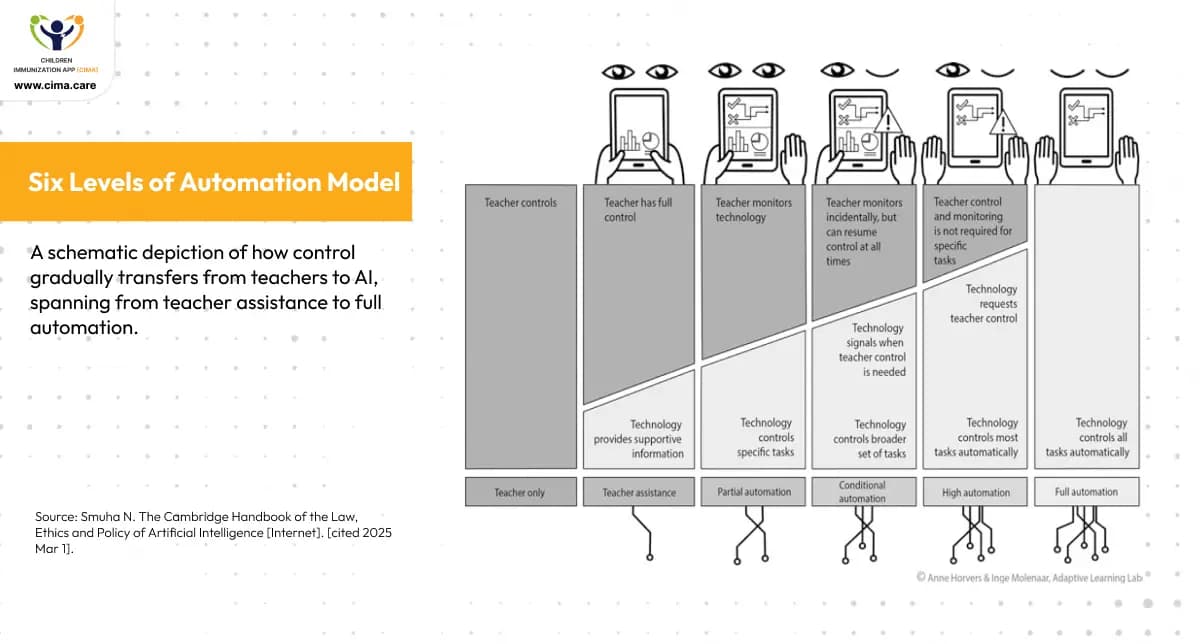

- Where We Stand on the Six Levels of Automation: The figure shows the Cambridge Handbook’s “Six Levels of Automation Model,” which illustrates how control can shift from teachers to technology/AI. CIMA Health Academy's system sits at the “teacher assistance” level: the Arena platform is not AI-based, and tasks such as quiz grading and certificate generation follow human-defined rules and oversight, with no artificial intelligence taking control. At every step, experts maintain authority over curriculum decisions and quality checks, reaffirming the Handbook’s view that “the goal is not to reach full automation” in education.

Six Levels of Automation Model: CIMA operates at the non-AI “teacher assistance” level of automation, maintaining human control.
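To make the idea of grading by "human-defined rules" concrete, here is a minimal Python sketch of deterministic, rule-based quiz grading. It is illustrative only and does not describe the Arena platform's actual implementation: the `grade_quiz` function, the `QuizResult` type, and the 80% pass threshold are hypothetical assumptions.

```python
from dataclasses import dataclass

# Hypothetical pass mark chosen by course experts, not by a model.
PASS_THRESHOLD = 0.8


@dataclass(frozen=True)
class QuizResult:
    score: float  # fraction of questions answered correctly
    passed: bool  # whether the learner qualifies for a certificate


def grade_quiz(
    answers: dict[str, str],
    answer_key: dict[str, str],
    pass_threshold: float = PASS_THRESHOLD,
) -> QuizResult:
    """Grade a quiz by exact comparison with an expert-written answer key."""
    if not answer_key:
        raise ValueError("answer_key must contain at least one question")
    # Unanswered questions count as incorrect.
    correct = sum(
        1 for question, expected in answer_key.items()
        if answers.get(question) == expected
    )
    score = correct / len(answer_key)
    return QuizResult(score=score, passed=score >= pass_threshold)


# Example with made-up questions.
answer_key = {"q1": "a", "q2": "c", "q3": "b", "q4": "d", "q5": "a"}
learner_answers = {"q1": "a", "q2": "c", "q3": "b", "q4": "d", "q5": "b"}
print(grade_quiz(learner_answers, answer_key))
```

Because every outcome follows from an answer key and a threshold that experts set in advance, the result is fully reproducible and auditable, which is what keeps this kind of automation at the assistance level.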

Conclusion: CIMA Care’s Alignment with Responsible AI Practices

The Cambridge Handbook highlights key considerations for AI in healthcare, emphasizing that AI should support rather than displace human expertise, employ robust procedural safeguards, and respect cultural diversity. CIMA Care embodies these principles through:

- Human-Centered Development: We ensure healthcare professionals guide content creation and review, reflecting the Handbook’s directive that AI should “assist, not replace human educators.”

- Evidence-Based Methodology: Our reliance on vetted evidence-based material, including sources from WHO, UNICEF, and UNODC, reflects the Handbook’s emphasis on “procedural safeguards” and accurate, justifiable content.

- Fairness and Representation: By integrating multiple global perspectives, we heed the Handbook’s warning against “cultural imperialism,” ensuring diverse voices shape our AI-assisted materials.

Together, these practices demonstrate how CIMA Care responsibly leverages AI to enhance global health education and vaccine confidence while maintaining professional oversight and safeguarding ethical standards. As we expand our digital health solutions, we remain committed to ensuring AI serves as a support tool, never supplanting expert judgment, in advancing equitable healthcare worldwide.

To learn more about our responsible digital health initiatives, visit:

https://www.linkedin.com/company/cima-care-gmbh/posts/?feedView=all

CIMA Care's principles align with Cambridge Handbook guidelines for responsible AI in healthcare.

Blog Resources

- 1- Smuha N. The Cambridge Handbook of the Law, Ethics and Policy of Artificial Intelligence [Internet]. [cited 2025 Mar 1].
